In the modern era of Artificial Intelligence (AI), the line between what is real and what is fake has become increasingly blurred. Technologies once considered groundbreaking, such as deepfakes, generative AI models, and synthetic media, now exist at the intersection of innovation and potential deception. While these technologies bring unprecedented possibilities for creativity, entertainment, and productivity, they also introduce significant ethical, societal, and security risks. In this article, we explore the dynamics of AI-driven fakery, its implications, and how we can differentiate between genuine and fabricated content.

The Emergence of AI in Content Creation

Artificial intelligence has revolutionized content creation, automating tasks that were once time-consuming or impossible. Today, AI algorithms can generate highly realistic images, videos, and written content. Widely used tools such as OpenAI’s GPT-4, Google’s Gemini, and DeepSeek produce fluent text and code, while related generative systems create artwork, compose music, produce video, and simulate voices with uncanny accuracy.

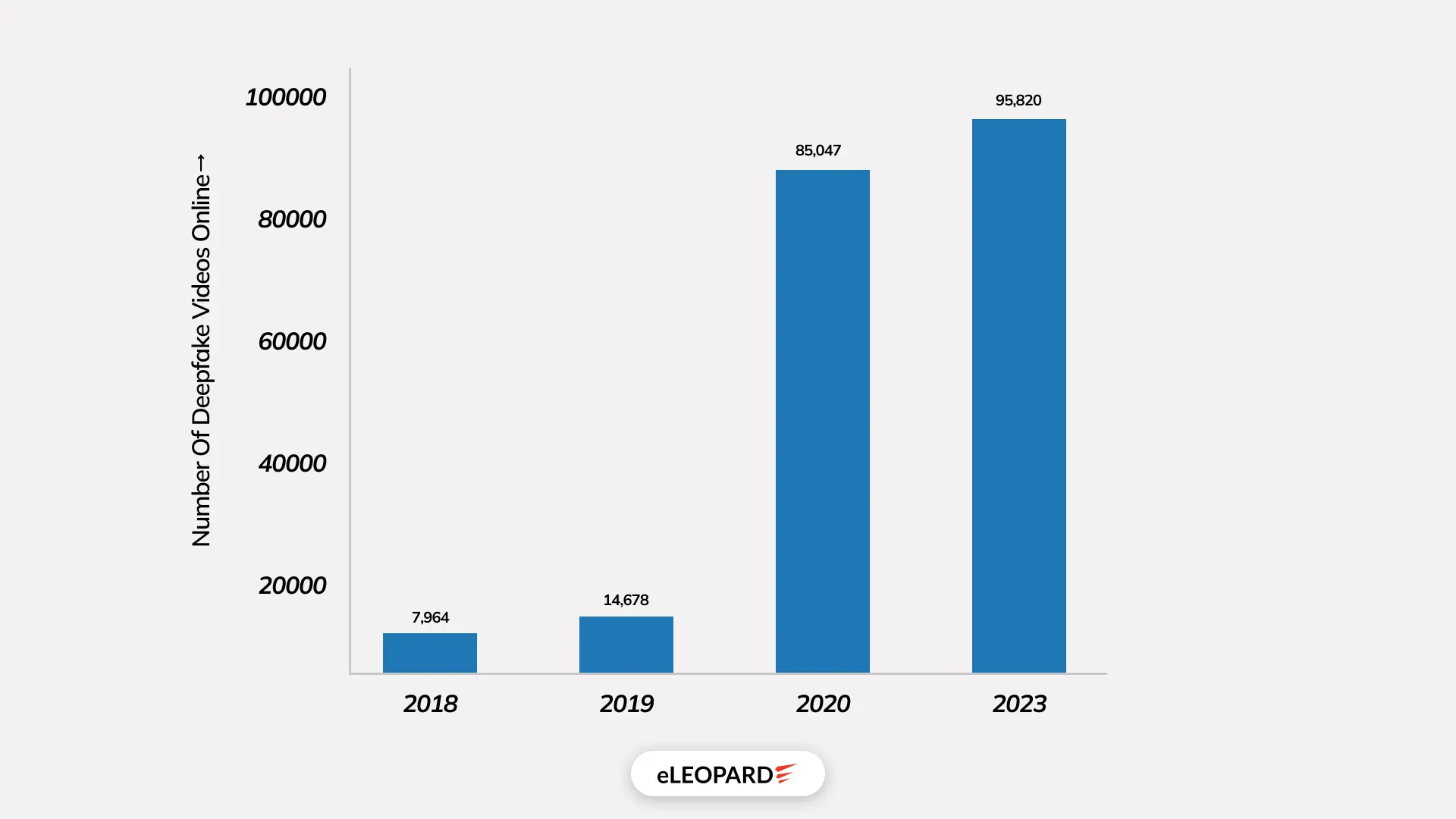

In particular, AI-generated images and videos, such as those created with Generative Adversarial Networks (GANs) or deepfake tools, have become so sophisticated that the human eye often cannot distinguish them from real footage. Deepfake face-swapping, which first surfaced publicly in 2017, lets users swap faces in videos, often with jaw-dropping realism. According to a 2022 study by Sensity AI, a leading provider of deepfake detection technology, 96% of all deepfake content online is pornographic, with more than 60% featuring non-consensual use of individuals’ faces.

While the potential of AI-generated media is immense, so too is the risk that it will be used maliciously to create “fake” content that deceives audiences.

Deepfake Technology: A Double-Edged Sword

The rise of deepfake technology has presented both immense opportunities and considerable threats. Deepfakes typically rely on deep generative models, such as autoencoders and GANs, to create hyper-realistic, computer-generated images or videos that make people appear to say or do things they never actually did. With enough training data, these systems can replicate a person’s voice, mannerisms, and facial expressions with extraordinary precision.
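The adversarial training behind GANs can be written as the minimax objective from the original GAN formulation (Goodfellow et al., 2014). A generator G turns random noise z into synthetic samples, and a discriminator D outputs the probability that a sample is real; D is trained to maximize the objective while G is trained to minimize it:

$$
\min_{G}\,\max_{D}\; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] \;+\; \mathbb{E}_{z \sim p_{z}}\big[\log\big(1 - D(G(z))\big)\big]
$$

In other words, the generator improves precisely by learning to fool a detector. Many modern systems use modified losses, so treat this as the conceptual core of the technique rather than any specific tool’s recipe.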

This technology has been used for various purposes, from creating viral videos and art projects to enhancing entertainment experiences. But on the darker side, deepfakes are increasingly used to spread misinformation, defame individuals, or manipulate public opinion, especially during critical events like elections.

In fact, according to the 2023 Digital Civil Society Report published by the Stanford Cyber Policy Center, 40% of online content related to political disinformation in the United States was generated using AI-powered synthetic media, including deepfakes.

One of the most widely cited examples of manipulated political video occurred in 2019, when a clip of U.S. House Speaker Nancy Pelosi was slowed down to make her appear intoxicated and incoherent. The video spread quickly across social media platforms, gaining millions of views before it was flagged as manipulated. Although it was a crude edit rather than a true AI deepfake, the incident showed how easily public trust can be eroded by fabricated media, a risk that AI-generated deception only amplifies.

The Evolution of AI and Its Capabilities

Artificial Intelligence has evolved from rule-based systems and narrow machine learning models into highly adaptive, multimodal architectures capable of reasoning across text, images, audio, and video simultaneously. Early AI systems were largely deterministic, limited to predefined instructions, whereas modern AI models rely on deep neural networks trained on massive datasets; leading language models, for example, are trained on trillions of tokens of text.

By 2026, frontier large language models (LLMs) are widely reported to exceed one trillion parameters, enabling contextual understanding, long-term memory simulation, and autonomous decision-making in controlled environments. According to industry benchmarks published by McKinsey, AI-driven automation now influences over 45% of enterprise-level decision workflows, up from just 10% in 2020.

This rapid evolution has expanded AI’s usefulness, but it has also made it harder to distinguish synthetic outputs from authentic human-generated content.

“Fake” vs “Real” AI in 2026

In 2026, the distinction between “fake” and “real” AI no longer lies solely in whether content is machine-generated, but in intent, transparency, and verifiability.

- “Fake” AI refers to deceptive or manipulative applications—synthetic media designed to mislead, impersonate, or fabricate reality.

- “Real” AI focuses on augmentation tools that enhance productivity, accuracy, creativity, and decision-making while maintaining transparency.

A 2025 survey by the World Economic Forum revealed that 62% of global respondents reported encountering AI-generated content they initially believed to be real, highlighting how normalized synthetic media has become.

The Emergence of “Fake” AI

Fake AI did not emerge because of technological failure—it emerged because of human incentives. As AI tools became cheaper, faster, and more accessible, malicious use scaled just as quickly.

Key drivers include:

- Political propaganda and psychological operations

- Financial fraud and identity theft

- Non-consensual synthetic media

- AI-generated misinformation at an industrial scale

Cybersecurity firms estimate that AI-assisted fraud losses surpassed $90 billion globally in 2025, a 35% increase compared to pre-generative AI years.

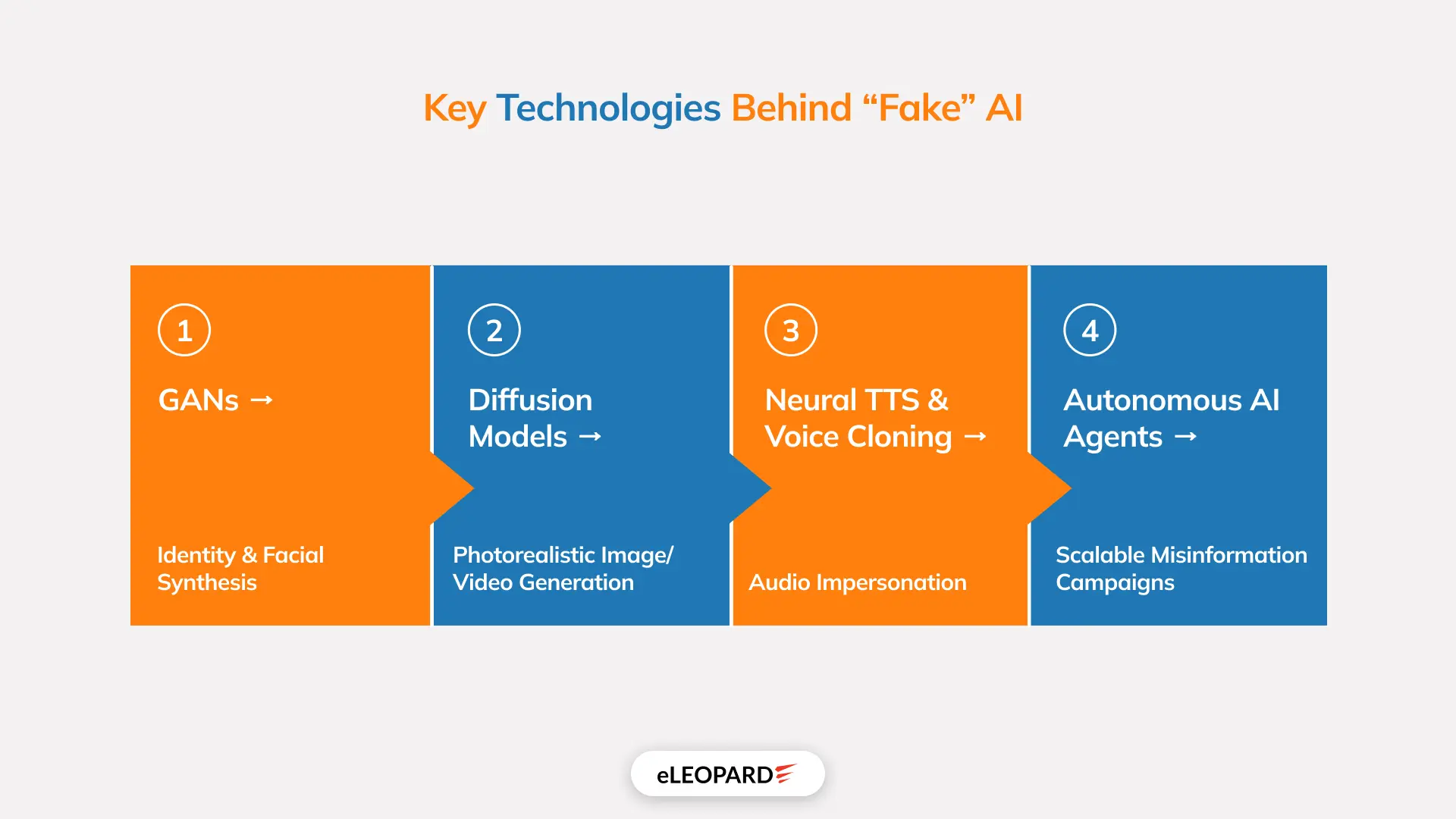

Key Technologies Behind “Fake” AI

Core Technical Stack

- GANs → Identity & facial synthesis

- Diffusion Models → Photorealistic image/video generation

- Neural TTS & Voice Cloning → Audio impersonation

- Autonomous AI Agents → Scalable misinformation campaigns
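Diffusion models learn to undo a gradual noising process. The Python sketch below shows only the forward (noising) half, assuming the linear variance schedule from the original DDPM paper (β rising from 1e-4 to 0.02 over 1,000 steps); the pixel values are hypothetical. It uses the closed form x_t = √ᾱ_t · x_0 + √(1 − ᾱ_t) · ε. A trained network would learn to predict and remove that noise step by step, which is how photorealistic images are generated starting from pure noise.

```python
import math
import random


def linear_beta_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances that increase linearly across the schedule."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product of (1 - beta_s) for s <= t: how much signal survives."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def noise_to_step(x0, t, alpha_bars, rng):
    """Sample x_t from N(sqrt(alpha_bar_t) * x0, (1 - alpha_bar_t) * I) in one jump."""
    a = alpha_bars[t]
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for x in x0]


alpha_bars = cumulative_alphas(linear_beta_schedule())
pixels = [0.8, -0.2, 0.5, 0.1]  # hypothetical pixel values scaled to [-1, 1]
rng = random.Random(0)
slightly_noisy = noise_to_step(pixels, 10, alpha_bars, rng)  # still close to the image
pure_noise = noise_to_step(pixels, 999, alpha_bars, rng)     # almost no signal left
```

Because ᾱ at the final step is tiny, the last sample is essentially Gaussian noise; generation runs this process in reverse.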

Why Detection Is Hard

- Models optimize against detection tools

- Outputs lack “human error” patterns

- Variations are generated at scale

The Impact of Fake AI

The most profound impact of fake AI is not misinformation itself, but epistemic erosion—the gradual loss of confidence in one’s ability to know what is true. When fabricated content becomes indistinguishable from authentic records, doubt becomes the default response to all information.

This erosion has cascading consequences:

- Legal systems struggle with evidentiary integrity

- Journalism faces credibility crises

- Democratic processes become vulnerable to perception manipulation

- Individuals experience psychological distress from identity misuse

Over time, societies risk entering a “post-verification” environment, where truth is negotiated socially rather than established empirically. In such a context, power shifts toward those who control narrative volume rather than factual accuracy.

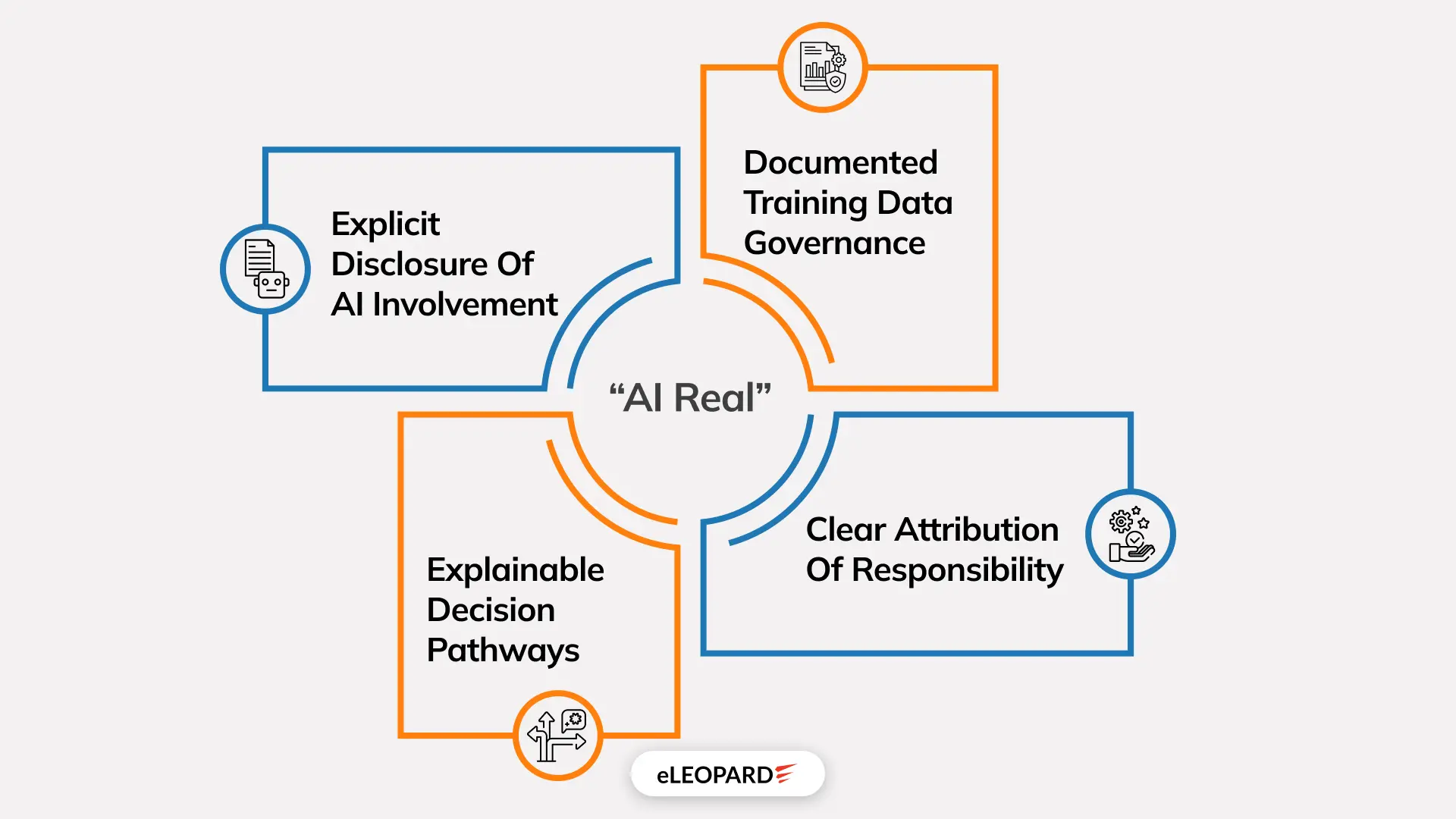

What Does “Real AI” Mean?

Key characteristics of real AI include:

- Explicit disclosure of AI involvement

- Documented training data governance

- Explainable decision pathways

- Clear attribution of responsibility

In this sense, real AI is not defined by realism but by institutional trustworthiness. Authenticity becomes a property of systems, not sensory perception.

AI’s Role in Enhancing Real-World Applications

When aligned correctly, AI functions as a force multiplier for human capability. In medicine, AI augments diagnostic accuracy by synthesizing patterns across millions of patient records. In climate science, AI models simulate complex systems at resolutions previously computationally infeasible. In engineering, AI-driven optimization reduces waste, energy consumption, and error rates.

These applications share common traits: constrained deployment, domain-specific training, and rigorous validation. Importantly, success is measured not by believability but by outcome reliability. This stands in stark contrast to fake AI, where impact is measured by persuasion rather than performance.

Domains Where “Real AI” Thrives

Healthcare

- Diagnostic augmentation

- Predictive risk modeling

Climate & Environment

- Weather forecasting

- Resource optimization

Industry

- Predictive maintenance

- Supply chain intelligence

Education

- Adaptive learning systems

- Personalized tutoring

These systems prioritize accuracy over persuasion.

The Role of Humans in AI-Created Content

Despite increasing autonomy, AI systems remain fundamentally dependent on human agency. Humans design objectives, curate training data, define reward functions, and determine deployment contexts. As such, every AI-generated artifact reflects a chain of human decisions.

The notion that AI “creates independently” obscures responsibility. Ethical accountability does not disappear with automation—it becomes distributed. Designers, deployers, and users all share responsibility for downstream effects.

In the age of synthetic media, human judgment becomes more valuable, not less. Interpretation, contextualization, and ethical reasoning remain uniquely human functions.

The Ethics of AI-Generated Content

Ethical challenges surrounding AI-generated content extend beyond misuse into structural concerns. Training models on scraped data raises issues of consent and intellectual property. Synthetic replication of human likeness challenges notions of identity ownership. Algorithmic amplification risks reinforcing bias and inequality.

Ethical AI governance requires more than voluntary guidelines. It demands enforceable standards, cross-border cooperation, and continuous oversight. Without ethical infrastructure, technological capability will continue to outpace moral restraint.

How Can We Identify Fake vs Real AI?

Historically, humans relied on sensory cues to assess authenticity. Blurred edges, unnatural lighting, or awkward phrasing once signaled manipulation. In the era of advanced generative AI, these cues have lost diagnostic value. Modern AI systems are optimized precisely to eliminate such perceptual artifacts.

As a result, the identification of fake versus real AI has shifted from perceptual evaluation to verification-based reasoning. Authenticity is no longer something we “see”; it is something we confirm.

Ask:

- Who created this?

- Can the source be verified?

- Is metadata intact?

- Does independent confirmation exist?

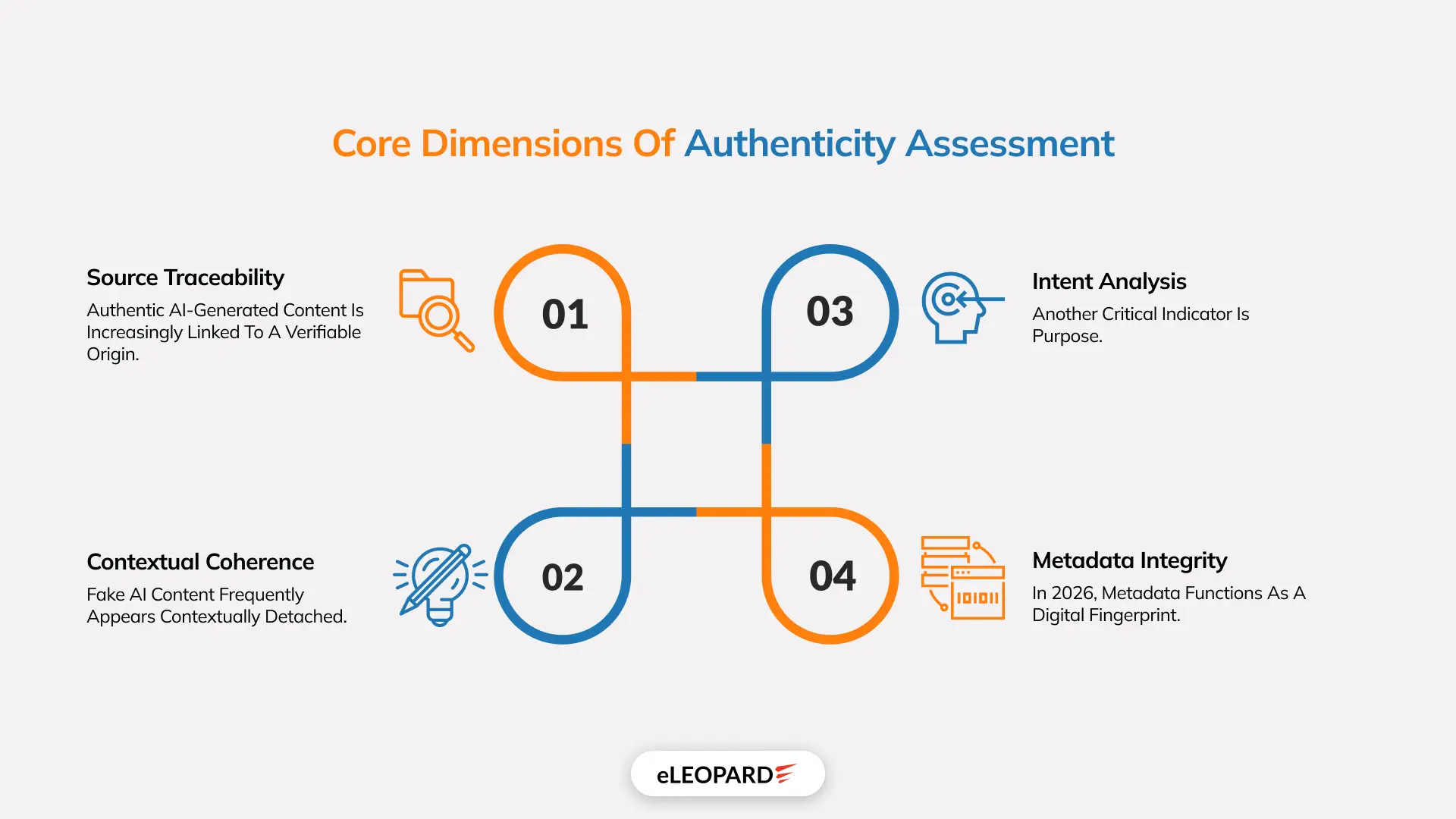

Core Dimensions of Authenticity Assessment

1. Source Traceability

Authentic AI-generated content is increasingly linked to a verifiable origin. This includes:

- Identifiable creators or institutions

- Documented creation tools

- Time-stamped generation records

Fake AI often lacks a consistent origin story or relies on anonymous, disposable accounts designed to evade accountability.

2. Contextual Coherence

Fake AI content frequently appears contextually detached. While the content itself may be realistic, it often:

- Appears without corroboration from trusted sources

- Emerges suddenly during high-impact events

- Lacks alignment with established factual timelines

Real AI content tends to exist within a broader informational ecosystem where claims can be cross-validated.

3. Intent Analysis

Another critical indicator is purpose. Fake AI is typically engineered to:

- Provoke emotional reactions

- Polarize audiences

- Exploit urgency or outrage

Real AI applications prioritize utility, clarity, or problem-solving over emotional manipulation.

4. Metadata Integrity

In 2026, metadata functions as a digital fingerprint. Authentic content increasingly contains:

- Embedded generation signatures

- Device or model identifiers

- Cryptographic hashes

Fake AI content often strips or alters metadata to prevent traceability.
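The cryptographic-hash idea can be sketched with Python’s standard `hashlib`: a SHA-256 fingerprint recorded at creation time changes completely if even one byte of the file changes. This is a minimal illustration, assuming the recorded fingerprint is stored somewhere an attacker cannot also rewrite (for example, a signed manifest or an external registry); the sample bytes are placeholders.

```python
import hashlib
import hmac


def fingerprint(data: bytes) -> str:
    """SHA-256 digest of the exact bytes of a file, as a 64-character hex string."""
    return hashlib.sha256(data).hexdigest()


def matches_record(data: bytes, recorded_hex: str) -> bool:
    """True only if the bytes are identical to those fingerprinted at creation time."""
    # compare_digest performs a constant-time comparison of the two strings
    return hmac.compare_digest(fingerprint(data), recorded_hex)


original = b"example image bytes"   # placeholder for a real file's contents
record = fingerprint(original)      # stored alongside or apart from the file
edited = b"example image bytez"     # a single-byte change
```

A hash proves integrity, not origin: it shows the file is unchanged since the fingerprint was taken, but not who took it. That is why provenance systems pair hashes with signatures.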

Tech Solutions to Combat Fake AI

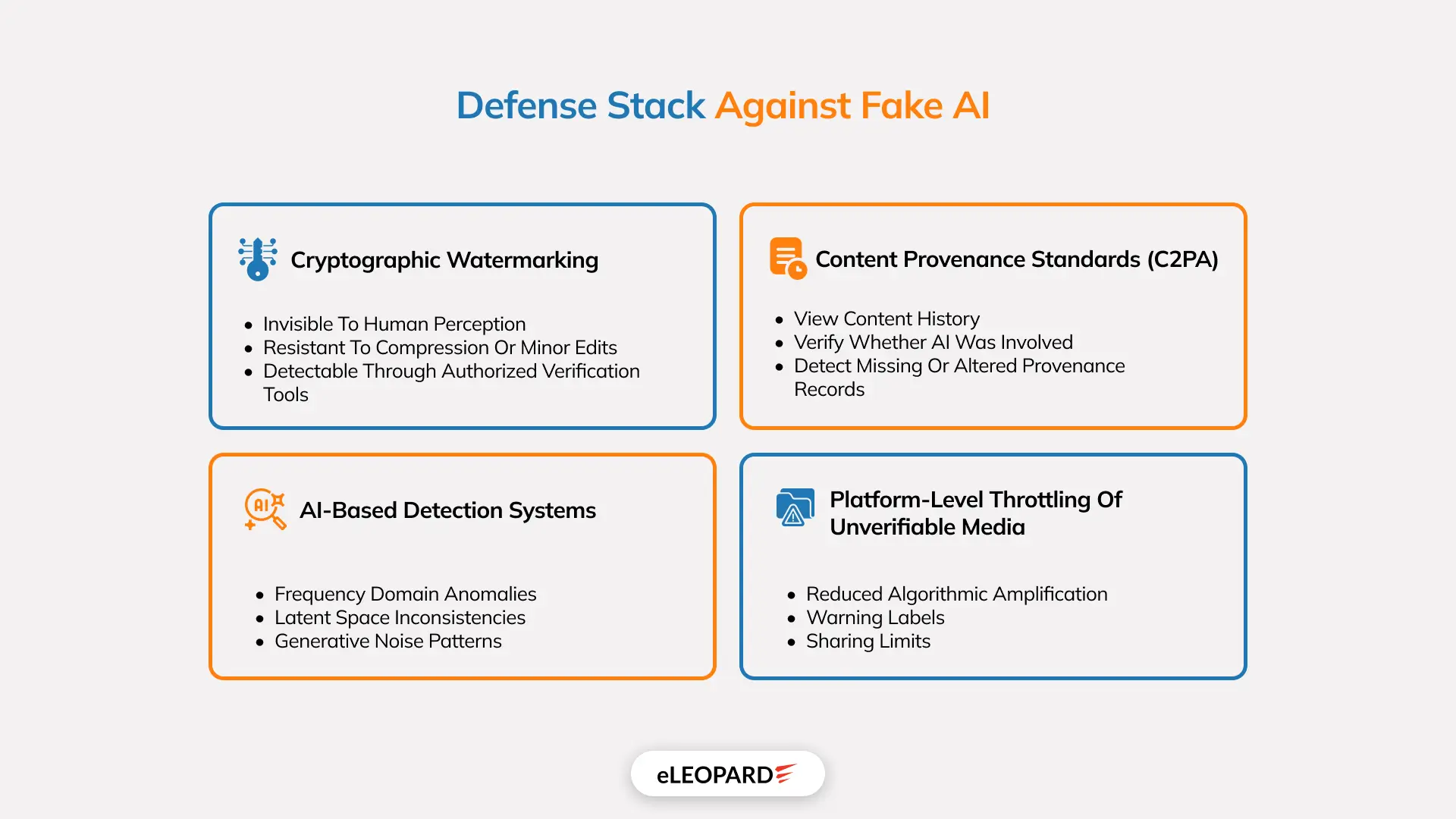

Technological interventions form the first line of defense against fake AI. However, these systems operate within adversarial environments where malicious actors continuously adapt. Each solution raises the cost of deception but does not eliminate it.

1. Cryptographic Watermarking

What It Is

Cryptographic watermarking embeds mathematically verifiable signals directly into AI-generated outputs. Unlike visible watermarks, these signals are:

- Invisible to human perception

- Resistant to compression or minor edits

- Detectable through authorized verification tools
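Production watermarking schemes differ by medium and are often proprietary, so the sketch below swaps in a simple, published idea for text: a keyed “green list” watermark in the spirit of Kirchenbauer et al. (2023). A secret key pseudorandomly marks about half of all possible next tokens as green, depending on the previous token; the watermarked generator prefers green tokens, and a verifier holding the key counts them and computes a z-score. The toy generator, vocabulary, and key are hypothetical stand-ins for a real language model.

```python
import hashlib
import hmac
import math
import random

GAMMA = 0.5  # expected fraction of green tokens in unwatermarked text


def is_green(key: bytes, prev_token: str, token: str, gamma: float = GAMMA) -> bool:
    """Keyed pseudorandom test: is `token` on the green list that follows `prev_token`?"""
    digest = hmac.new(key, f"{prev_token}|{token}".encode(), hashlib.sha256).digest()
    value = int.from_bytes(digest[:8], "big") / 2**64  # roughly uniform in [0, 1)
    return value < gamma


def generate(vocab, length, rng, key=None, gamma=GAMMA):
    """Toy generator: picks among random candidates; with a key, prefers green ones."""
    tokens = ["<s>"]
    for _ in range(length):
        candidates = rng.sample(vocab, 8)  # stand-in for a model's top-8 choices
        if key is not None:
            green = [c for c in candidates if is_green(key, tokens[-1], c, gamma)]
            if green:
                candidates = green
        tokens.append(candidates[0])
    return tokens[1:]


def detect_z(tokens, key, gamma=GAMMA):
    """z-score of the observed green count versus what unwatermarked text would give."""
    hits, prev = 0, "<s>"
    for tok in tokens:
        hits += int(is_green(key, prev, tok, gamma))
        prev = tok
    n = len(tokens)
    return (hits - gamma * n) / math.sqrt(n * gamma * (1 - gamma))


vocab = [f"w{i}" for i in range(1000)]
key = b"demo-secret-key"  # hypothetical key held by the AI provider
marked = generate(vocab, 200, random.Random(1), key=key)
plain = generate(vocab, 200, random.Random(2))
```

Here `detect_z(marked, key)` lands far above typical thresholds, while `detect_z(plain, key)` stays near zero. Because detection is statistical, editing a fraction of the tokens lowers the score gradually rather than erasing the mark outright; heavy paraphrasing, however, can push it back toward zero.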

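Why It Matters

Because the signal travels with the content itself, platforms, newsrooms, and individuals can confirm that an output came from a participating AI system without relying on visual inspection.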

Limitations

- Watermarks can be degraded through heavy manipulation

- Requires widespread adoption by AI developers

- Verification tools must remain accessible and trusted

2. Content Provenance Standards (C2PA)

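Conceptual Foundation

C2PA, the Coalition for Content Provenance and Authenticity, publishes an open technical standard for attaching cryptographically signed provenance records, known as Content Credentials, to media files. These records describe how a piece of content was created and which edits were applied to it afterward.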

Practical Impact

C2PA allows users to:

- View content history

- Verify whether AI was involved

- Detect missing or altered provenance records

This shifts trust from platforms to verifiable metadata infrastructure.
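The tamper-evident history that C2PA enables can be illustrated with a hash-linked chain of signed records. This is not the C2PA data model, which embeds signed manifests in the file and relies on certificate-backed signatures; here a shared HMAC key stands in for those signatures, and the action names and key are hypothetical. Each entry records an action, a hash of the resulting content, and the previous entry’s signature, so a verifier can detect both altered and missing records.

```python
import hashlib
import hmac
import json

FIELDS = ("action", "content_hash", "prev")


def _sign(key: bytes, body: dict) -> str:
    """HMAC stand-in for a real digital signature over a canonical JSON encoding."""
    payload = json.dumps(body, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def append_entry(history: list, key: bytes, action: str, content: bytes) -> None:
    """Record an action and link it to the previous entry's signature."""
    body = {
        "action": action,
        "content_hash": hashlib.sha256(content).hexdigest(),
        "prev": history[-1]["signature"] if history else "",
    }
    history.append({**body, "signature": _sign(key, body)})


def verify(history: list, key: bytes, content: bytes) -> bool:
    """Check every signature and link, then that the last entry matches the content."""
    if not history:
        return False  # no provenance at all
    prev = ""
    for entry in history:
        body = {field: entry[field] for field in FIELDS}
        if body["prev"] != prev:
            return False  # a record is missing or out of order
        if not hmac.compare_digest(_sign(key, body), entry["signature"]):
            return False  # a record was altered after signing
        prev = entry["signature"]
    return history[-1]["content_hash"] == hashlib.sha256(content).hexdigest()


key = b"demo-signing-key"  # hypothetical; real systems use certificate-backed keys
history = []
append_entry(history, key, "captured", b"raw photo")
append_entry(history, key, "cropped", b"cropped photo")
append_entry(history, key, "ai_generative_fill", b"final photo")
```

Dropping the middle record, relabeling an action, or swapping in different final content all cause `verify` to fail, which corresponds to the “missing or altered provenance records” case listed above.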

Structural Challenge

C2PA is effective only when:

- Platforms enforce compliance

- Devices support provenance capture

- Users understand how to interpret signals

3. AI-Based Detection Systems

How Detection Works

Detection models analyze statistical irregularities that are invisible to humans, such as:

- Frequency domain anomalies

- Latent space inconsistencies

- Generative noise patterns

These systems operate probabilistically, assigning likelihood scores rather than binary judgments.
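The frequency-domain idea can be sketched on a single row of pixel values. Upsampling layers in some image generators have been reported to leave faint periodic, grid-like patterns, which appear as excess energy at high spatial frequencies. This toy measures that share of energy with a plain discrete Fourier transform and maps it to a likelihood score through a logistic curve; the cutoff, midpoint, and steepness are hypothetical calibration values, and real detectors learn far richer features from large labeled datasets.

```python
import cmath
import math


def high_frequency_ratio(signal, cutoff=0.25):
    """Share of spectral energy above `cutoff` cycles/sample (0.5 is the Nyquist limit)."""
    n = len(signal)
    mean = sum(signal) / n
    centered = [x - mean for x in signal]  # remove the constant (DC) component
    high = total = 0.0
    for k in range(1, n):
        coeff = sum(centered[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        energy = abs(coeff) ** 2
        total += energy
        if min(k, n - k) / n > cutoff:  # bins k and n - k are the same frequency
            high += energy
    return high / total if total else 0.0


def synthetic_likelihood(signal, midpoint=0.3, steepness=10.0):
    """Map the spectral feature to a 0..1 score (hypothetical calibration)."""
    ratio = high_frequency_ratio(signal)
    return 1.0 / (1.0 + math.exp(-steepness * (ratio - midpoint)))


n = 64
smooth_row = [math.sin(2 * math.pi * t / 32) for t in range(n)]   # smooth gradient
artifact_row = [s + (-1) ** t for t, s in enumerate(smooth_row)]  # plus a grid pattern
```

The function returns a score between 0 and 1 rather than a verdict, matching how these systems report likelihoods instead of binary judgments.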

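Arms Race Dynamic

Detectors learn the artifacts of the generators they were trained on. As generation methods improve, or as attackers tune their outputs specifically to evade a known detector, those artifacts shrink or change, and accuracy drops until the detector is retrained.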

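Key Weakness

Because detection is probabilistic, every decision threshold trades false positives (authentic content flagged as synthetic) against false negatives (synthetic content accepted as real), and detectors often generalize poorly to generators they have never seen.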

4. Platform-Level Throttling of Unverifiable Media

Rather than outright censorship, many platforms now adopt friction-based controls:

- Reduced algorithmic amplification

- Warning labels

- Sharing limits

Content without provenance is not banned, but it is slowed down.
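A friction-based policy can be expressed as a small decision function. In this sketch, a hypothetical platform combines a provenance check with a detector’s likelihood score; the thresholds, multipliers, labels, and share limits are illustrative assumptions, not any real platform’s policy. The key property is that amplification never drops to zero: unverifiable media is slowed and labeled, not removed.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Distribution:
    amplification: float                # multiplier on the item's ranking score
    label: Optional[str]                 # warning shown to viewers, if any
    max_shares_per_hour: Optional[int]   # None means no sharing limit


def distribution_policy(has_valid_provenance: bool, synthetic_likelihood: float) -> Distribution:
    """Friction, not removal: unverifiable media gets less reach, never zero reach."""
    if has_valid_provenance:
        return Distribution(1.0, None, None)
    if synthetic_likelihood >= 0.8:
        return Distribution(0.2, "Likely AI-generated; origin could not be verified", 5)
    if synthetic_likelihood >= 0.5:
        return Distribution(0.5, "Origin could not be verified", 20)
    return Distribution(0.8, None, None)
```

For example, `distribution_policy(False, 0.9)` keeps the item visible but cuts its ranking weight to a fifth, attaches a warning, and caps sharing at five per hour.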

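Why This Works

Deceptive content depends on rapid, wide distribution. Slowing unverifiable media buys time for fact-checkers and provenance checks without requiring platforms to make a definitive ruling on authenticity.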

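Risk

The same friction can fall on legitimate content that lacks provenance, such as footage captured on older devices or shared by sources who need to stay anonymous.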

Limitation: Why Technology Alone Cannot Win

Every defensive technology creates a corresponding incentive to bypass it. As long as:

- AI tools remain accessible

- Deception remains profitable

- Verification remains optional

technological solutions will always lag behind innovation in fake AI. Technology does not solve the problem; it buys time for institutional, legal, and cultural adaptation.

Public Awareness and Media Literacy

Core Skills of AI-Era Literacy

- Understanding how generative AI works

- Recognizing emotional manipulation tactics

- Evaluating source credibility

- Demanding provenance and verification

Education as a Security Layer

Public awareness functions as a non-technical firewall. Studies of media-literacy programs suggest that informed individuals are:

- Less likely to share synthetic misinformation

- More resistant to outrage-based manipulation

- More capable of evaluating ambiguous content

This makes education not merely a social good, but an infrastructure for democratic stability.

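Cultural Shift: Skepticism Without Cynicism

The goal is verification-minded skepticism, not blanket distrust. Treating everything as fake does not protect audiences; it accelerates the epistemic erosion that fake AI produces in the first place.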

Real AI at eLEOPARD: Building Trustworthy AI That Works in the Real World

At eLEOPARD, we don’t build AI to imitate reality; we build AI to operate within it responsibly, transparently, and effectively. Our AI services are designed around verifiable outcomes, ethical deployment, and measurable business value, aligning with what “real AI” truly means in practice.

Unlike deceptive or black-box AI systems, eLEOPARD’s AI solutions are grounded in accountability, explainability, and real-world constraints. Every model we deploy is purpose-built, domain-specific, and governed by clear human oversight.

What Makes eLEOPARD’s AI “Real”?

Our approach to AI prioritizes substance over spectacle:

- Transparent AI Usage: We explicitly disclose where and how AI is used. No hidden automation, no misleading representations.

- Domain-Specific Intelligence: Our AI systems are trained and validated within defined business, industrial, or operational contexts, not on generic datasets optimized for persuasion.

- Explainable & Auditable Models: Decisions made by our AI can be traced, interpreted, and challenged, ensuring trust across stakeholders.

- Human-in-the-Loop Governance: AI at eLEOPARD augments human expertise rather than replacing accountability. Humans remain responsible for outcomes.

Our Position on Fake vs Real AI

eLEOPARD does not engage in deceptive AI practices such as:

- Non-consensual synthetic media

- Identity impersonation

- Manipulative deepfake technologies

- Unverifiable or opaque AI outputs

We believe real AI earns trust through clarity, not illusion.

As fake AI accelerates epistemic erosion, organizations have a responsibility to deploy AI that strengthens confidence rather than undermines it. In an era where seeing is no longer believing, real AI is defined by governance, intent, and impact. That is the standard eLEOPARD builds by.

Closing Insight

The battle between fake and real AI will not be decided by algorithms alone. It will be shaped by how societies:

- Design verification systems

- Regulate platforms

- Educate citizens

- Enforce accountability

Technology builds the tools.

Humans decide whether truth survives.

Conclusion

The boundary between fake AI and real AI is not determined by algorithms but by governance, ethics, and collective responsibility. Artificial intelligence has amplified humanity’s capacity to create, and to deceive. The defining question of this era is not whether AI can fabricate reality, but whether society can preserve truth in its presence.

The future of AI will be shaped not by its sophistication, but by the values that constrain it.